Edition 33 - The role of AppSec engineers is moving from being carpenters to gardeners

I don't think "AppSec is dead", but the role of AppSec engineers is certainly changing

'Tis the season of existential dread. Everyone in tech is wondering if their job will exist in the next few years. If AI can write all the code, do we need developers? If AI can write Terraform and deploy, do we need DevOps? If AI can write this blog post, do we really need authors? And so on.

If you lead a team, this dread compounds outside your immediate role, too. Should I hire experienced folks who can tell the AI what to do? Should I hire smart folks with no experience, as they have “nothing to unlearn”, and so on?

In my recent conversations, this dread has reached the AppSec team too. Every 3rd day, you’ll see a launch that says you can automate something you did manually. SAST became SAST+AI (SAST tools with AI features for triage), then became AI-powered SAST (SAST that uses AI to discover business-logic findings), and finally became a button in Claude (eliminating SAST as a step in the SDLC). While the current state of these tools is debatable (I’ve written about this here), the direction is clear. Much of what constitutes a “security assessment” will be automated by AI agents. We don’t yet know who will do it (existing security companies, foundation model companies, or new startups), but it’s gonna happen!

I’ve seen this play out within Seezo, too. What started as an experiment to automate parts of Security Design Review has now reached a point where most of the heavy lifting is done by the product. Humans are still involved in reviewing results, but their role diminishes with each new model drop and platform improvement.

If it’s inevitable that AI agents will do most of the security assessment work (scanning, triaging, and communicating), then what’s the role of the AppSec engineer? Do we even need an AppSec team?

With my own experience using AI as an end user and building an AI-powered product, it’s clear to me that the AppSec team will remain. But their role will change.

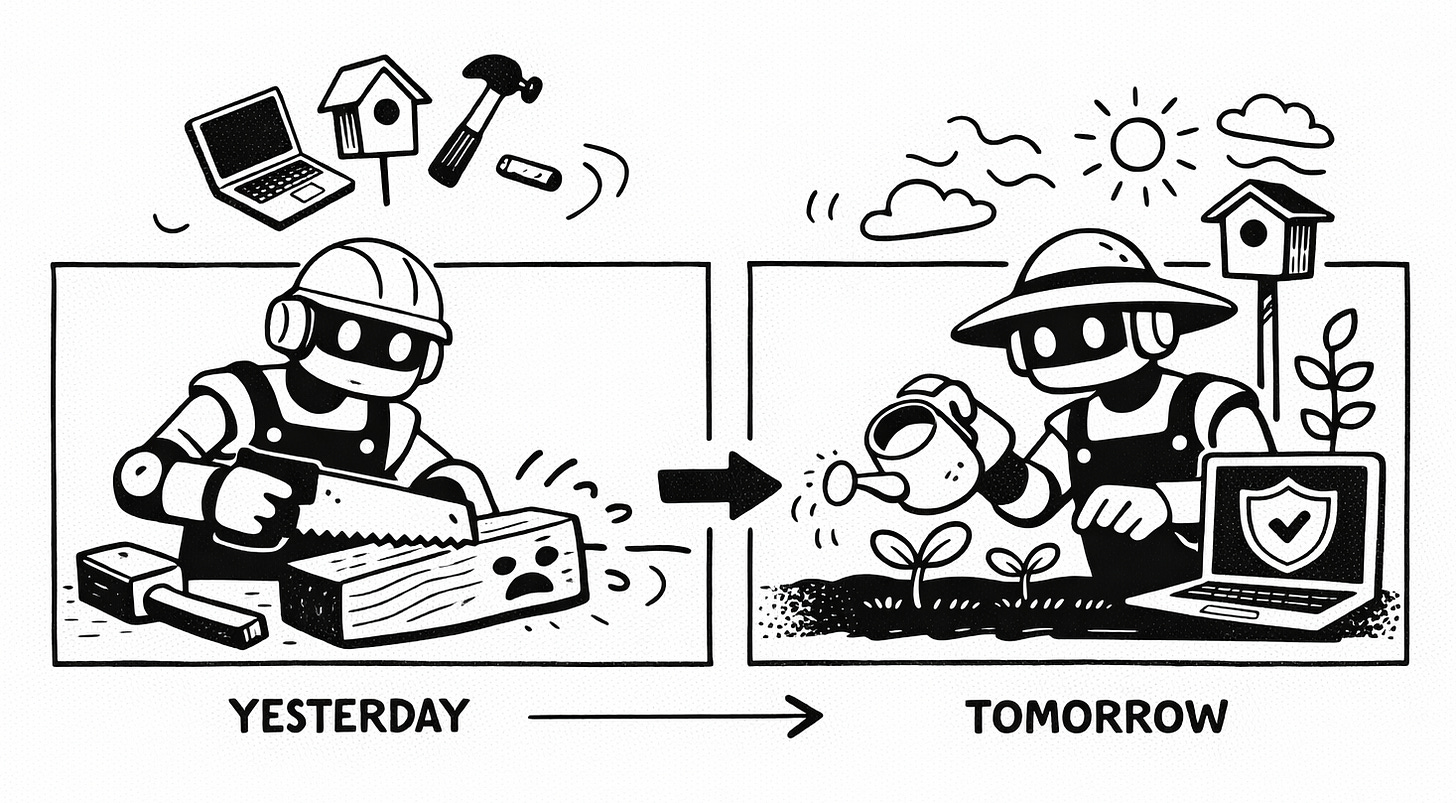

From Carpenter to Gardener

When Pooja (my partner) and I were expecting our daughter, we turned into one of those nervous parents-to-be who wanted to read everything about parenting. We were surrounded by books, subscribed to parenting newsletters, and so on. We were the “research” parents for a while (a story for another day, but that phase ended, and we switched to an “instinct-led approach” pretty soon). In this phase, one framework strongly influenced our thinking, and we have tried to apply it to this day. The framework, by Alison Gopnik, suggests that parenting is more about being a gardener than a carpenter.

Carpenters take a block of wood and “make” a chair out of it. Every little detail is handled by the carpenter. Gardeners are different. They water the plants, add fertilizer, and ward off weeds, but they “let” the plants grow. Gopnik's book, The Gardener and the Carpenter (and her many articles), emphasizes this approach.

Merits of the parenting framework aside (you could argue both sides of which approach is better), when I think about how AppSec is changing, I feel like we have been moving away from carpentry to gardening for a while now, and AI accelerates that trend significantly.

We have gone from “doing the security assessment” to “taking the tool’s help to do the assessment”, to “configuring the tool that does the assessment and then triaging the results”. The next stage is simple. The entire assessment will be done end-to-end by AI agents: configuring, scanning, triaging, and communicating.

But it’s clear to me (building an AI product and using AI extensively as a daily driver) that the quality of results from AI agents depends on three things: the quality of the agent, the quality of the underlying foundation model, *and* the context provided to the agent. The 3rd part is not something you can buy from a SaaS tool. AppSec teams have to build this themselves.

What does “gardening” in AppSec look like?

To break it down, even in the optimistic scenario of AppSec Agents being amazing at security assessments, there will be 3 things AppSec engineers will still have to do:

1. Define the workflow: When should SAST run? Who should receive the results? When should a human review results? What should trigger a pipeline block? These are questions your AI agents cannot answer, cos there is no “right” answer and the correct thing to do depends on your org’s security and technology culture. Depending on which product/BU/team you are working with, you may even need different workflows for different teams. While you may have tooling to orchestrate your AppSec agents, defining and tweaking the workflow will still be the AppSec team’s job. In some cases, you may delegate parts of this to the dev team (e.g., via Security Champions), but AppSec teams still need to own it.

2. Supply context: This will probably be the most time-consuming and hardest-to-define aspect of an AppSec team’s job. It’s clear to me that the better the context you provide an agent, the better the results it produces. So, what information do you need to supply to your API Security Agent so it actually knows your rate-limiting requirements for internal APIs? What are the secure-by-default patterns that a Security Design Review tool should recommend? This problem is harder than it looks because context does not live in one place. It’s spread across “sources of truth” (such as code and deployments) and “sources of intent” (security standards documents, PRDs, etc.). Depending on how your company operates, AppSec teams need to provide the right context to the right agents to extract the most value. Provide too much context, and you fill up the context window with junk. Provide too little, and your AppSec agents give you generic crap.

3. Be the human in the loop and treat each instance of it as an agent failure: For the foreseeable future, AI agents running these assessments will still need human help. They will need humans to validate some results and to review certain kinds of changes. Hopefully, over time, the percentage of items that need human review goes down. Until then, we will need AppSec engineers to review the results, add more context, and decide what to do with the output. I think a useful frame for looking at this is to treat each human-in-the-loop interaction as a failure on the agent's part. In addition to resolving whatever needs to be resolved, the human should also “teach” the agent how to handle similar situations in the future. This could mean persisting information in a context file (e.g., CLAUDE.md), writing a skill/sub-agent to handle a particular type of scenario, and so on. A good measure of an agent's success would be the accuracy of its results and how often humans needed to be involved.
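To make the first item a bit more concrete, here is a minimal sketch of what "defining the workflow" could look like if you wrote it down as data instead of tribal knowledge. Everything in it is hypothetical: the `WorkflowPolicy` fields, the severity scale, and the team names are assumptions for illustration, not the configuration format of any real tool.

```python
from dataclasses import dataclass

# Hypothetical severity scale; a real org would define its own.
SEVERITY_ORDER = ["low", "medium", "high", "critical"]


@dataclass(frozen=True)
class WorkflowPolicy:
    """Per-team answers to the workflow questions (all fields are illustrative)."""
    team: str
    notify: str             # who receives the results
    human_review_at: str    # lowest severity that needs a human review
    block_pipeline_at: str  # lowest severity that blocks the pipeline


def decide(policy: WorkflowPolicy, severity: str) -> list[str]:
    """Return the actions this team's workflow prescribes for one finding."""
    rank = SEVERITY_ORDER.index(severity)
    actions = [f"notify:{policy.notify}"]
    if rank >= SEVERITY_ORDER.index(policy.human_review_at):
        actions.append("human_review")
    if rank >= SEVERITY_ORDER.index(policy.block_pipeline_at):
        actions.append("block_pipeline")
    return actions


# Two made-up teams with different risk appetites.
payments = WorkflowPolicy("payments", "appsec-oncall", "medium", "high")
internal_tools = WorkflowPolicy("internal-tools", "security-champion", "high", "critical")

print(decide(payments, "high"))
print(decide(internal_tools, "high"))
```

The same "high" finding blocks the pipeline for one team and only triggers a review for the other. That difference is a judgment call about your org, which is exactly why the agent can't make it for you.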

Note: 2 & 3 are somewhat related. While “context” may be something we add before an assessment starts, “committing things to memory” is also important in response to how Agents react. If a false positive recurs across different agent runs, it’s important to commit to memory why it is a false positive and how the agent can handle it better. In a way, these are 3 distinct activities, but also a loop that feeds into each other and improves over time.
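Here is a rough sketch of that loop, assuming a very simple setup: every time a human steps in, they record a lesson, and the lessons are rendered back into text you could append to the agent's context file. The `AgentMemory` class, its method names, and the sample false positive are all made up for illustration; no real agent framework is implied.

```python
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    """Hypothetical store of lessons learned from human-in-the-loop reviews."""
    lessons: dict[str, str] = field(default_factory=dict)
    runs: int = 0
    human_interventions: int = 0

    def record_run(self, needed_human: bool) -> None:
        """Count one agent run and whether a human had to step in."""
        self.runs += 1
        if needed_human:
            self.human_interventions += 1

    def teach(self, pattern: str, rationale: str) -> None:
        """Persist why a recurring finding is a false positive."""
        self.lessons[pattern] = rationale

    def intervention_rate(self) -> float:
        """Share of runs that needed a human; lower is better."""
        return self.human_interventions / self.runs if self.runs else 0.0

    def render_context(self) -> str:
        """Render lessons as text that could be added to a context file."""
        lines = ["## Known false positives"]
        for pattern, rationale in sorted(self.lessons.items()):
            lines.append(f"- {pattern}: {rationale}")
        return "\n".join(lines)


memory = AgentMemory()
memory.record_run(needed_human=True)  # a human had to triage this run...
memory.teach("hardcoded-secret in tests/fixtures",
             "Fixture keys are dummy values; do not report.")  # ...so teach the agent
memory.record_run(needed_human=False)

print(memory.render_context())
print(f"human intervention rate: {memory.intervention_rate():.0%}")
```

The point is less the code and more the habit: a human intervention should leave something behind that the next run can use, and the intervention rate gives you a number to watch over time.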

This is a big change

If an AppSec engineer slipped into a coma in 2015 and woke up to *this* reality, they’d be unable to recognize the role. This change will not be easy for everyone to make. What’s worse, there isn’t enough tooling built to support these behaviors. Security vendors have spent decades figuring out the best UX for triaging results (and we haven’t perfected it), but no one knows what the best UX for “providing context” is. Defining Security Standards and Security Workflows used to be something you did once a year. Now things have to happen very quickly. This change will bring collateral damage. Depending on the organizational context, some companies may have already made this change, while others may take many years to do so. If you are taking on a new role in AppSec, I’d urge you to understand where on the spectrum of this change the team lies and whether that is a good fit for you. To be clear, I don’t think of this change as a simple “maturity curve”. Teams that haven’t adopted it are not necessarily less mature (although that’s one possible explanation); it may also reflect how software is built in the company or what industry the company belongs to (some industries will take longer to undergo an AI transformation, and rightly so).

Where are you on the Spectrum?

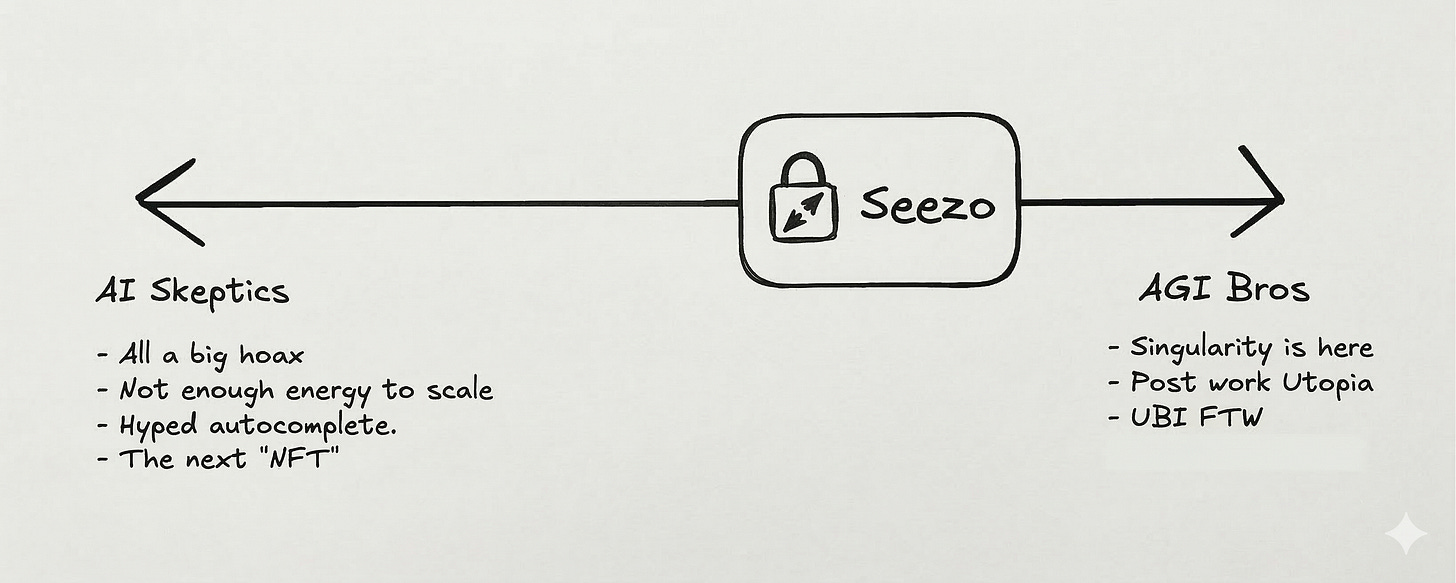

In an internal meeting at Seezo, I half-joked that we all need to be in the same range of the “AI adoption spectrum” (see above). Irrespective of where you lie on the spectrum, it’s important to work with a team that is adjacent to your position. If you are an AI Skeptic in an AI-techbro team, you are gonna struggle. If you are cautiously optimistic about AI, but your company won’t use it until the “technology is mature”, you are gonna be frustrated.

That’s it for today. Does the Carpenter vs. Gardener analogy land, or am I being crazy by mapping AI to the one book I read many years ago? Are there other frameworks that help you navigate this crazy change? Hit me up! You can drop me a message on Twitter (or whatever it is called these days), LinkedIn, or email. I am also the co-founder of Seezo. We help companies automate security design reviews at scale. Check us out if that’s your thing :) If you find this newsletter useful, share it with a friend, colleague, or on social media.