Edition 32: BigCo is building in AppSec, but it's too early to get excited

OpenAI, Anthropic, Google DeepMind, GitHub, & AWS have announced AI-powered AppSec solutions. But should we get ready to switch?

Before we begin…

Happy New Year! As some of you may have noticed, we have made a few exciting changes to Boring AppSec. Nothing changes with this newsletter, but you can now access all episodes of the BoringAppSec Podcast here. Anshuman is also bringing his sharp thoughts on AI & Security to the Boring AppSec Platform here. Finally, we have a Slack community where readers and authors of Boring AppSec hang out. Come join us if that’s your thing!

2025 was a year of breakneck speed in AI, but one trend mildly surprised me: Frontier labs and hyperscalers actively building AppSec tools.

After decades of us yelling from the rooftops about AppSec’s importance, the tech industry finally seems to be paying attention. Over the holidays, I dug deep to understand what this means for our industry. For now, I think the real impact is not that we have better AppSec tools (we don’t), but that it gives us a peek into what’s coming next.

Here are a few thoughts:

1. Most of what we saw in 2025 from BigCo was demoware.

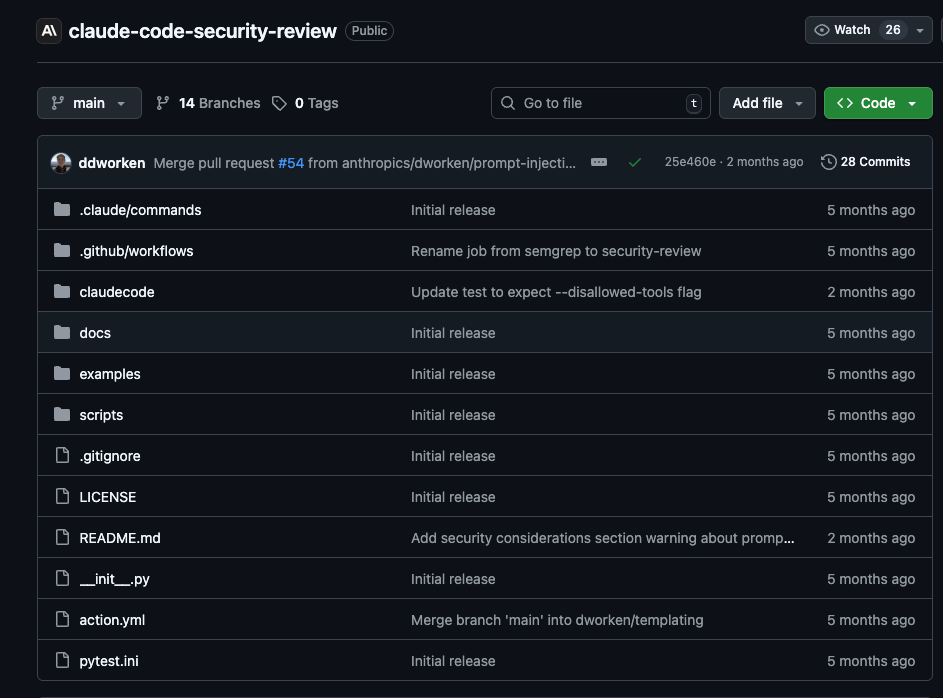

OpenAI’s Aardvark launched 3 months ago and is still in private beta. In that time, OpenAI has shipped multiple models, many new versions of Codex, and much more. A few weeks before Aardvark, Anthropic launched the “security review” command within Claude Code and a companion GitHub Action to review PRs: an elegant solution on top of the mighty impressive Claude Code application. But security-review.md hasn’t been updated in 5 months. In that same window, Anthropic released multiple new models, took the code gen world by storm, and is now threatening to do the same for non-engineers with Claude CoWork.

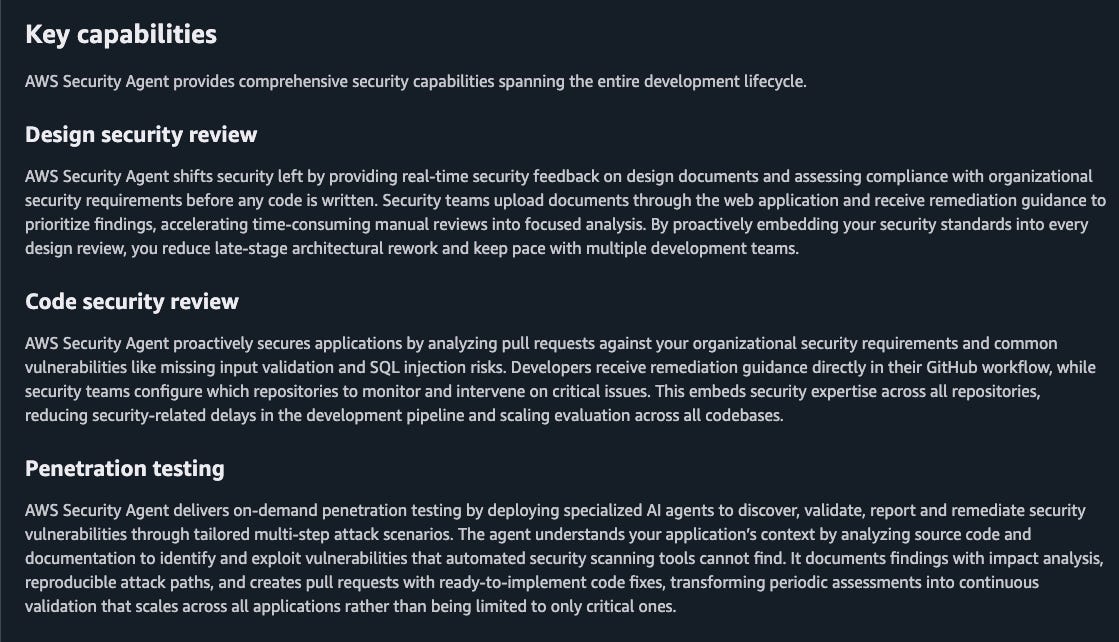

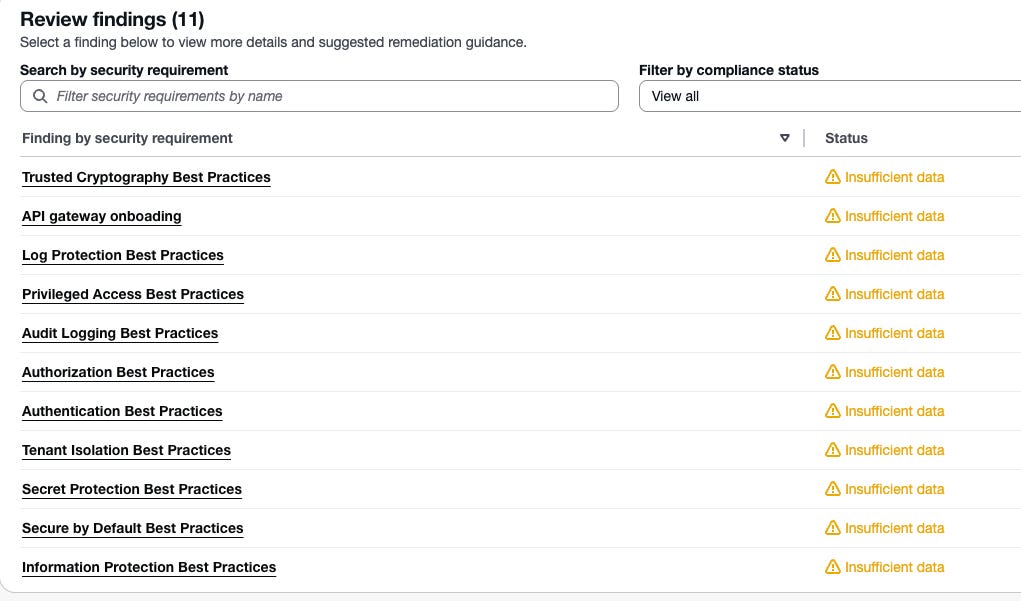

AWS’s Security Agent promises to automate security reviews, SAST, and pen testing. I tested a few of these tools and found them underwhelming compared to what these teams are capable of.

These companies have insanely talented teams. The level of effort behind what’s shipped so far leads me to believe the goal was not to build world-class AppSec products, but to demonstrate capability: to show what’s possible with frontier models rather than to grow revenue with AppSec tools.

2. This complicates things for AppSec teams.

If I had a nickel for every time someone asked me, “But won’t Cursor replace AppSec?”, I’d be a rich man. AppSec teams are probably hearing the same from their CFOs: why spend $$ on SAST tools when Claude can do it? I hear you can just “vibe code” software now, so why not build it in-house? Why go through procurement hell when AWS has a free option?

These are valid questions. But notice what happened: the burden of proof just shifted to the AppSec team. They now have to show why a dedicated security vendor is better than the behemoths. I wouldn’t blame anyone for invoking the old “nobody gets fired for buying IBM” adage and giving in. Others will put in the work to show these tools aren’t ready. Either way, AppSec teams are stuck with a bad trade-off: accept the demoware to keep the peace, or spend time fighting a battle they shouldn’t have to fight.

3. I don’t blame the labs for this.

LLMs are generating more code than ever. More code means more vulnerabilities. But it also means the bottleneck has shifted. Writing code is no longer the constraint; reviewing it is. Security reviews included. The labs know this, and they’re trying to get ahead of it.

This isn’t new. Every major technology shift creates security problems, and the companies closest to the shift usually take a first crack at solving them. Cloud created misconfiguration hell, so AWS built GuardDuty. LLMs are creating insecure code at scale and overwhelming review capacity, so the labs are building AppSec tools.

4. What does this mean for AppSec vendors?

Probably not as much as you’d think. GitHub has 100M+ developers, native workflow integration, and Microsoft’s backing. They’ve had GitHub Advanced Security (GHAS) for years. And yet Snyk and Semgrep are thriving. AWS built GuardDuty, and Wiz still became one of the fastest-growing security companies ever.

Why? Security isn’t a winner-take-all market. I don’t want to beat the platform vs. point-solution drum again, but history tells us both survive. And while it’s tempting to say “AI changes things,” I am not sure how it changes this dynamic.

5. 2026 may be different.

Even if their attempts in 2025 were feeble, there are signs the labs are getting serious. Anthropic recently hired a SentinelOne product executive to lead cybersecurity products. OpenAI has researchers working on Aardvark. Job listings hint at roadmaps with a greater focus on cybersecurity products. I wouldn’t be surprised if we see 1-2 credible AppSec products from these labs in the next 12-18 months. But if history is any indication, AppSec products of all kinds (from labs, startups, and old-school players) will continue to thrive, while analysts and bloggers keep up the pointless platform vs. point-solution debates :P

That’s it for today! Are you an AppSec professional who has been asked the “but won’t Claude kill AppSec” question? Do you think what we have today from the labs is more than just demoware? How are you leveraging AI to scale AppSec? Let me know! You can drop me a message on Twitter (or whatever it is called these days), LinkedIn, or email. I am also the co-founder of Seezo. We help companies automate security design reviews at scale. Check us out if that’s your thing :) If you find this newsletter useful, share it with a friend, colleague, or on social media.